Fending Off Automated Imitation: Nightshade's Protocols for Protecting Art


Key Takeaways

- Nightshade is an AI tool that “poisons” digital art, making it unusable for training AI models.

- The tool alters digital artwork in a way that appears unchanged to humans but confuses AI algorithms.

- Nightshade provides a way for digital creators to protect their work from being used in AI datasets without consent.


AI tools are revolutionary and can now hold conversations, generate human-like text, and create images based on a single word. However, the training data these AI tools use often comes from copyrighted sources, especially when it comes to text-to-image generators like DALL-E, Midjourney, and others.

Stopping generative AI tools from training on copyrighted images is difficult, and artists from all walks of life have struggled to keep their work out of AI training datasets. That is starting to change with the advent of Nightshade, a free tool built to poison the training data of generative AI models and let artists take some power back.

What Is AI Poisoning?

AI poisoning is the act of deliberately corrupting the training dataset of an AI model. It is similar to feeding the AI wrong information on purpose, so that the trained model malfunctions or fails to recognize an image. Tools like Nightshade alter the pixels of a digital image so that it appears completely different to an AI training on it, while looking largely unchanged to the human eye.


For example, if you upload a poisoned image of a car to the internet, it will look the same to us humans, but an AI attempting to train itself to identify cars by looking at images of cars on the internet will see something completely different.
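To make the idea concrete, here is a minimal Python sketch of a bounded pixel perturbation. It is not Nightshade’s actual algorithm: the linear “model”, the random stand-in image, and the names `score`, `perturb`, and `epsilon` are all assumptions made for illustration. It only shows that nudging every pixel by a tiny amount can still move a model’s output noticeably.

```python
import random

def score(pixels, weights):
    """Toy linear 'model': a weighted sum of pixel values (an assumption, not a real network)."""
    return sum(p * w for p, w in zip(pixels, weights))

def perturb(pixels, weights, epsilon):
    """Move every pixel by at most `epsilon` in the direction that raises the toy score."""
    shifted = []
    for p, w in zip(pixels, weights):
        step = epsilon if w > 0 else -epsilon if w < 0 else 0
        shifted.append(min(255, max(0, p + step)))  # stay inside the valid 0-255 range
    return shifted

random.seed(0)
pixels = [random.randint(40, 215) for _ in range(64 * 64)]  # stand-in grayscale image
weights = [random.uniform(-1, 1) for _ in pixels]            # stand-in model weights

poisoned = perturb(pixels, weights, epsilon=4)
max_change = max(abs(a - b) for a, b in zip(pixels, poisoned))

print("largest per-pixel change:", max_change)
print("score before:", round(score(pixels, weights), 1))
print("score after: ", round(score(poisoned, weights), 1))
```

A change of a few brightness levels per pixel is hard to spot by eye, yet the toy model’s score shifts because every small change pushes in the same direction. Real tools work against far more complex models, so treat this purely as intuition.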

A large enough sample size of these fake or poisoned images in an AI’s training data can damage its ability to generate accurate images from a given prompt as the AI’s understanding of the object is compromised.

Poisoning can even damage future iterations of the model, because the training data on which the model’s foundation is built is no longer fully correct. There are still open questions about what the future holds for generative AI, but protecting original digital work is a definite priority.
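The effect of a growing share of poisoned samples can be sketched with a deliberately tiny example. Everything here is hypothetical: images are reduced to a single number, the “model” is a nearest-centroid classifier, and the feature values 0.0 (car-like) and 10.0 (cow-like) are made up. The point is only that samples labeled “car” but looking like something else drag the model’s idea of a car away from the truth.

```python
def car_centroid(n_clean, n_poison, car_feature=0.0, cow_feature=10.0):
    """Mean feature of everything labeled 'car' when n_poison samples look cow-like to the model."""
    samples = [car_feature] * n_clean + [cow_feature] * n_poison
    return sum(samples) / len(samples)

def classify(x, car_c, cow_c=10.0):
    """Assign x to whichever class centroid is closer."""
    return "car" if abs(x - car_c) <= abs(x - cow_c) else "cow"

clean_c = car_centroid(n_clean=100, n_poison=0)       # trained on clean data only
poisoned_c = car_centroid(n_clean=100, n_poison=100)  # half of the 'car' samples are poisoned

test_feature = 6.0  # an input that sits closer to the cow side
print("clean model says:   ", classify(test_feature, clean_c))
print("poisoned model says:", classify(test_feature, poisoned_c))
```

With clean data the cow-leaning input is classified as a cow. Once enough poisoned samples shift the “car” centroid, the same input is classified as a car, which is the kind of compromised understanding described above.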

Using this technique, digital creators who do not consent to their images being used in AI datasets can protect them from being fed to generative AI without permission. Some platforms give creators the option to opt out of having their artwork included in AI training datasets. However, AI model trainers have disregarded such opt-out lists in the past and continue to do so with little to no consequence.

Compared to other digital artwork protection tools like Glaze, Nightshade is an offensive tool rather than a defensive one. Glaze prevents AI algorithms from mimicking the style of a particular image, while Nightshade changes how the image itself appears to the AI. Both tools come from the team led by Ben Zhao, Professor of Computer Science at the University of Chicago.

How to Use Nightshade

While the creator of the tool recommends using Nightshade alongside Glaze, it can also be used as a standalone tool to protect your artwork. Using the tool is fairly easy, as protecting your images with Nightshade takes only a few steps.

However, there are a few things you need to keep in mind before getting started.

- Nightshade is only available for Windows and macOS, with limited GPU support and a minimum of 4GB VRAM required. Non-Nvidia GPUs and Intel Macs aren’t supported at the moment. The Nightshade team points to a list of supported Nvidia GPUs (the GTX and RTX GPUs are found in the “CUDA-Enabled GeForce and TITAN Products” section). Alternatively, you can run Nightshade on your CPU, but performance will be slower.

- If you have a GTX 1660, 1650, or 1550, a bug in the PyTorch library can prevent you from launching or using Nightshade properly. The issue also extends to the Ti variants of these cards. The team behind Nightshade may fix it in the future by moving from PyTorch to TensorFlow, but there are no workarounds at the moment. That said, I managed to launch the program by running it with administrator access on my Windows 11 PC and waiting a few minutes for it to open. Your mileage may vary.

- If your artwork has lots of solid shapes or backgrounds, you may experience some artifacts. This can be countered by using a lower intensity of “poisoning.”
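The hardware notes above can be turned into a quick pre-flight checklist. The sketch below is a hypothetical helper, not part of Nightshade: the function name `preflight`, the name-matching patterns, and the wording of the notes are assumptions, and it encodes only the constraints listed in this article (Nvidia GPU, at least 4GB VRAM, CPU fallback, and the GTX 1660/1650/1550 issue).

```python
import re

# Cards the article flags as affected by the PyTorch bug (Ti variants match too).
AFFECTED = re.compile(r"GTX\s*(1660|1650|1550)", re.IGNORECASE)
# Rough heuristic for spotting an Nvidia card from its name (an assumption).
NVIDIA = re.compile(r"nvidia|geforce|gtx|rtx|titan", re.IGNORECASE)

def preflight(gpu_name, vram_gb):
    """Return (mode, notes) using only the requirements listed above."""
    notes = []
    if NVIDIA.search(gpu_name) and vram_gb >= 4:
        mode = "gpu"
    else:
        mode = "cpu"
        notes.append("GPU does not meet the listed requirements; expect slower CPU processing")
    if AFFECTED.search(gpu_name):
        notes.append("GTX 1660/1650/1550 family: a PyTorch bug may prevent launch")
    return mode, notes

print(preflight("NVIDIA GeForce RTX 3060", 12))
print(preflight("AMD Radeon RX 6600", 8))
print(preflight("NVIDIA GeForce GTX 1650 Ti", 4))
```

Matching on the GPU name is only a rough guess at compatibility. The supported-GPU list referenced by the Nightshade team remains the authority on whether a specific card will work.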

As far as protecting your images with Nightshade goes, here’s what you need to do. Keep in mind that this guide uses the Windows version, but these steps also apply to the macOS version.

- Download the Windows or macOS version from the Nightshade download page.

- Nightshade downloads as an archived folder with no installation required. Once the download is complete, extract the ZIP folder and double-click Nightshade.exe to run the program.

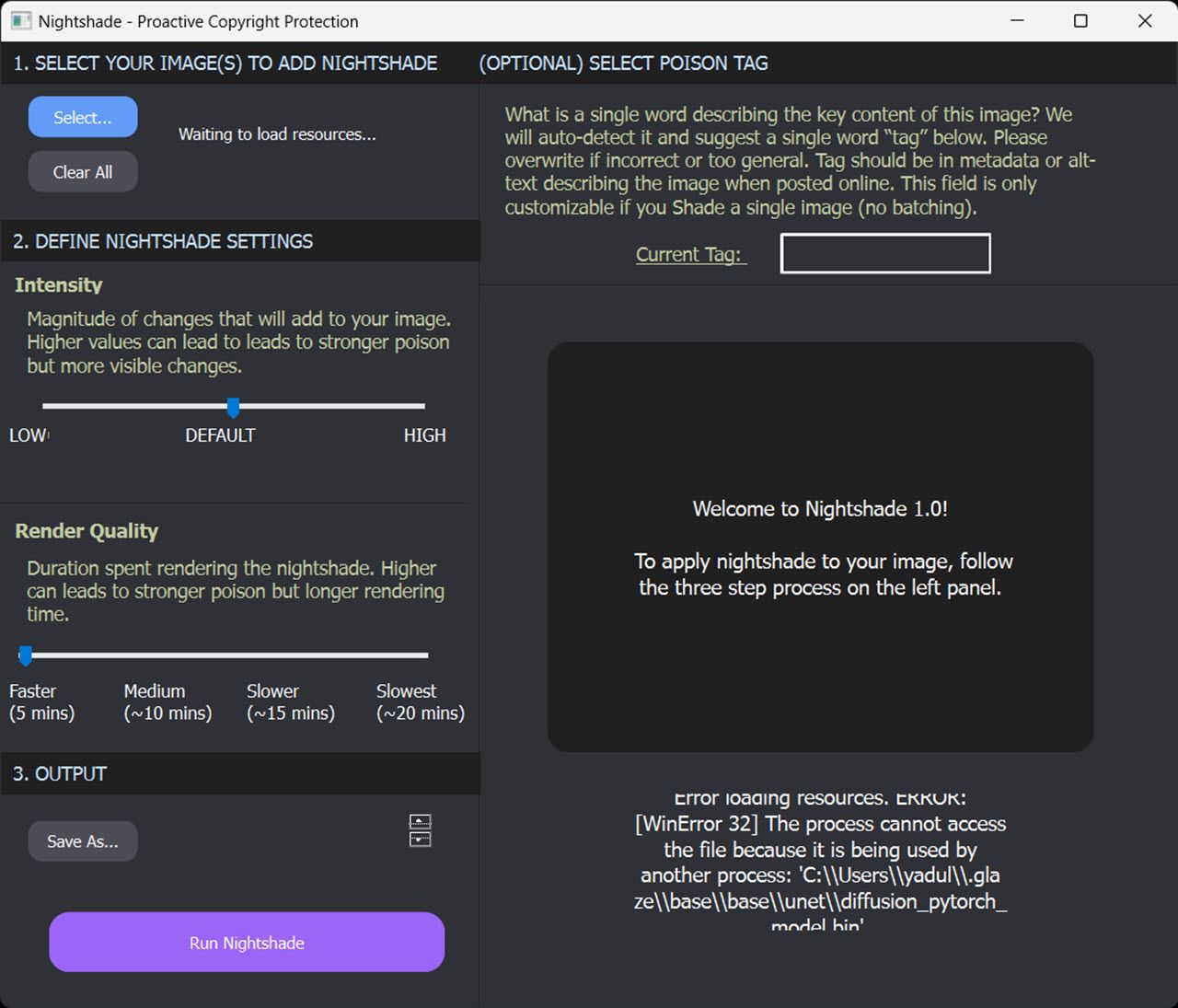

- Select the image you want to protect by clicking the Select button in the top-left. You can also select multiple images at once for batch processing.

- Adjust the Intensity and Render Quality dials according to your preferences. Higher values add stronger poisoning but can also introduce artifacts in the output image.

- Next, click the Save As button under the Output section to select a destination for the output file.

- Click the Run Nightshade button at the bottom to run the program and poison your images.

Optionally, you can also select a poison tag. Nightshade will automatically detect and suggest a single word tag if you don’t, but you can change it if it’s incorrect or too general. Keep in mind this setting is only available when you process a single image in Nightshade.

If all goes well, you should get an image that looks identical to the original to the human eye but completely different to an AI algorithm, protecting your artwork from generative AI.


- Title: Fending Off Automated Imitation: Nightshade's Protocols for Protecting Art

- Author: Brian

- Created at : 2025-01-31 09:25:40

- Updated at : 2025-02-01 12:10:24

- Link: https://tech-savvy.techidaily.com/fending-off-automated-imitation-nightshades-protocols-for-protecting-art/

- License: This work is licensed under CC BY-NC-SA 4.0.