Privacy Paradox: Decoding the Top 3 Bot Security Warnings

Chatbots have been around for years, but the rise of large language models, such as ChatGPT and Google Bard, has given the chatbot industry a new lease of life.

Millions of people now use AI chatbots worldwide, but there are some important privacy risks and concerns to keep in mind if you want to try out one of these tools.

Disclaimer: This post includes affiliate links.

If you click on a link and make a purchase, I may receive a commission at no extra cost to you.

1. Data Collection

Most people don’t use chatbots just to say hi. Modern chatbots are designed to process and respond to complex questions and requests, and users often include a lot of information in their prompts. Even if you’re only asking a simple question, you probably don’t want what you share to travel beyond your conversation.

According to OpenAI’s support section, you can delete your ChatGPT chat logs at any time, and those logs will then be permanently expunged from OpenAI’s systems after 30 days. However, the company will retain and review certain chat logs if they’ve been flagged for harmful or inappropriate content.

Another popular AI chatbot, Claude, also keeps track of your previous conversations. Anthropic’s support center states that Claude tracks “your prompts and outputs in the product to provide you with a consistent product experience over time in accordance with your controls.” You can delete your conversations with Claude, so it forgets what you’ve been talking about, but this doesn’t mean Anthropic will immediately delete your logs from its systems.

This, of course, raises the question: is my data being kept or not? Do ChatGPT and other chatbots use my data?

But the concerns don’t stop here.

How Does ChatGPT Learn?

In order to provide information, large language models are trained on huge amounts of data. According to Science Focus, ChatGPT alone was fed 300 billion words of information during its training period. This isn’t taken straight from a few encyclopedias. Rather, chatbot developers use masses of information from the internet to train their models. This can include data from books, movies, articles, Wikipedia entries, blog posts, comments, and even review sites.

Keep in mind that, depending on a chatbot developer’s privacy policy, some of the aforementioned sources may not be used in training.

Many critics have called ChatGPT something of a privacy nightmare, arguing that it cannot be trusted. So why is this the case?

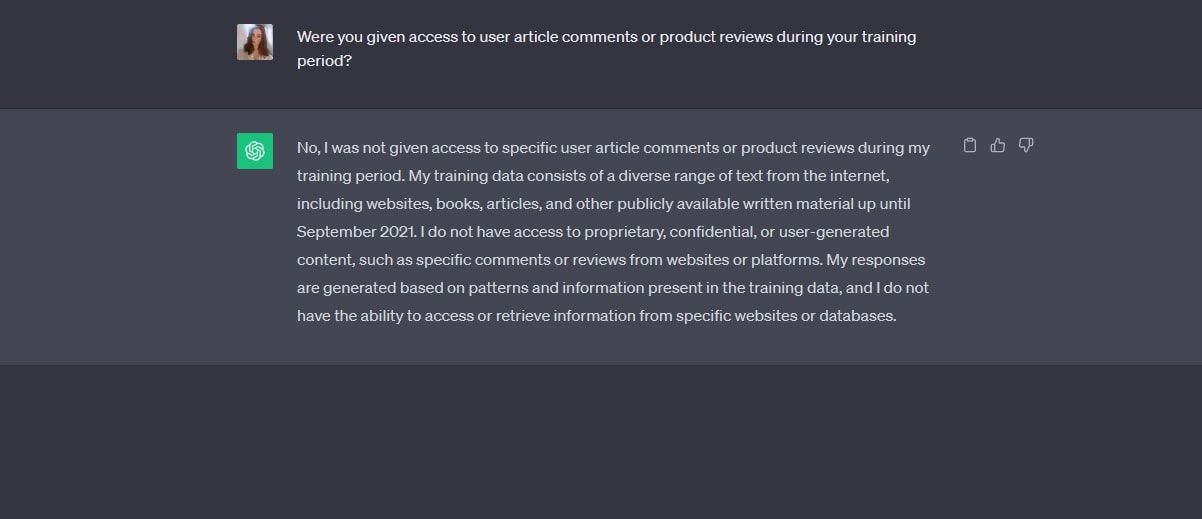

This is where things get a little blurry. If you ask GPT-3.5 directly whether it has access to product reviews or article comments, you’ll get a firm no. As the screenshot shows, GPT-3.5 states that it wasn’t given access to user article comments or product reviews in its training.

Rather, it was trained using “a diverse range of text from the internet, including websites, books, articles, and other publicly available written material up until September 2021.”

But is the same true of GPT-4?

When we asked GPT-4, we were told that “OpenAI did not use specific user reviews, personal data, or article comments” during the chatbot’s training. GPT-4 also told us that its responses are generated from “patterns in the data [it] was trained on, which primarily consists of books, articles, and other text from the internet.”

When we probed further, GPT-4 claimed that certain social media content may indeed be included in its training data, but that the content’s creators always remain anonymous. GPT-4 specifically stated that “Even if the content from platforms like Reddit was part of the training data, [it doesn’t] have access to specific comments, posts, or any data that can be linked back to an individual user.”

Another notable part of GPT-4’s response was this: “OpenAI has not explicitly listed every data source used.” Of course, it would be tough for OpenAI to list 300 billion words’ worth of sources, but this does leave room for speculation.

An Ars Technica article states that ChatGPT does collect “personal information obtained without consent.” The same article mentions contextual integrity, a concept holding that someone’s information should only be used in the context in which it was originally shared. If ChatGPT breaches this contextual integrity, people’s data could be at risk.

Another point of concern here is OpenAI’s compliance with the General Data Protection Regulation (GDPR), a European Union regulation that protects citizens’ data. Various European countries, including Italy and Poland, have launched investigations into ChatGPT over concerns about its GDPR compliance. For a short time, ChatGPT was even banned in Italy due to privacy worries.

OpenAI has previously threatened to pull out of the EU over planned AI regulations, but it has since retracted that threat.

ChatGPT may be the biggest AI chatbot today, but chatbot privacy issues don’t start and end with this provider. If you’re using a shady chatbot with a lackluster privacy policy, your conversations may be misused, or highly sensitive information may end up in its training data.

2. Data Theft

Like any online tool or platform, chatbots are vulnerable to cybercrime. Even if a chatbot did all it could to protect users and their data, there’s always a chance that a savvy hacker will manage to infiltrate its internal systems.

If a given chatbot service were storing your sensitive info, such as payment details for your premium subscription, contact data, or similar, this could be stolen and exploited if a cyberattack were to occur.

This is especially true if you’re using a less secure chatbot whose developers haven’t invested in adequate security protection. Not only could the company’s internal systems be hacked, but your own account could also be compromised if it isn’t protected by login alerts or an additional authentication layer, such as two-factor authentication.

Now that AI chatbots are so popular, cybercriminals have naturally flocked to the industry to run scams. Fake ChatGPT websites and plugins have been a major problem since OpenAI’s chatbot hit the mainstream in late 2022, with people falling for scams and handing over personal info to services posing as legitimate and trustworthy.

In March 2023, MUO reported on a fake ChatGPT Chrome extension stealing Facebook logins. The plugin could exploit a Facebook backdoor to hack high-profile accounts and steal user cookies. This is just one of numerous phony ChatGPT services designed to con unsuspecting victims.

3. Malware Infection

If you’re unknowingly using a shady chatbot, you may find it providing you with links to malicious websites. Maybe the chatbot has alerted you to a tempting giveaway, or provided a source for one of its statements. If the service’s operators have illicit intentions, the entire point of the platform may be to spread malware and scams via malicious links.

Alternatively, hackers may compromise a legitimate chatbot service and use it to spread malware. If the chatbot happens to be very popular, thousands or even millions of users could be exposed to this malware. Fake ChatGPT apps have even appeared on the Apple App Store, so it’s best to tread carefully.

In general, you should never click on a link a chatbot provides without first running it through a link-checking website. This may seem irritating, but it’s always best to be sure that the site you’re being led to isn’t malicious.

Additionally, you should never install a chatbot plugin or extension without first verifying its legitimacy. Do a little research on the app to see whether it’s been well reviewed, and search for the app’s developer to see whether anything shady turns up.

Chatbots Aren’t Impervious to Privacy Issues

Like most online tools nowadays, chatbots have been repeatedly criticized for their possible security and privacy pitfalls. Whether it’s a chatbot provider cutting corners on user safety or the ongoing risks of cyberattacks and scams, it’s crucial that you know what data your chatbot service collects about you and whether it uses adequate security measures.

- Title: Privacy Paradox: Decoding the Top 3 Bot Security Warnings

- Author: Brian

- Created at : 2024-10-02 17:09:34

- Updated at : 2024-10-03 22:55:14

- Link: https://tech-savvy.techidaily.com/privacy-paradox-decoding-the-top-3-bot-security-warnings/

- License: This work is licensed under CC BY-NC-SA 4.0.